Today, OpenAI announced Frontier—a new enterprise platform for building, deploying, and managing AI agents that can do real work across an organization. The announcement is significant not just for what it enables, but for what it reveals about where enterprise AI is heading.

The most telling part of the Frontier architecture isn’t the agents themselves. It’s what sits beneath them: a foundational Business Context layer that connects enterprise data, systems, and workflows into a shared knowledge base that every agent can reason over.

In our recent piece on Context Is the Product, we explored why clean, structured knowledge layers—not models—are what separate agents that work from agents that fail. Frontier takes this architectural principle and makes it the foundation of an entire enterprise platform.

What OpenAI Frontier Actually Is

Frontier is OpenAI’s play to move beyond API access and into the enterprise application stack. Rather than just providing models, Frontier gives organizations a platform to build, run, and manage AI agents that operate across their business systems.

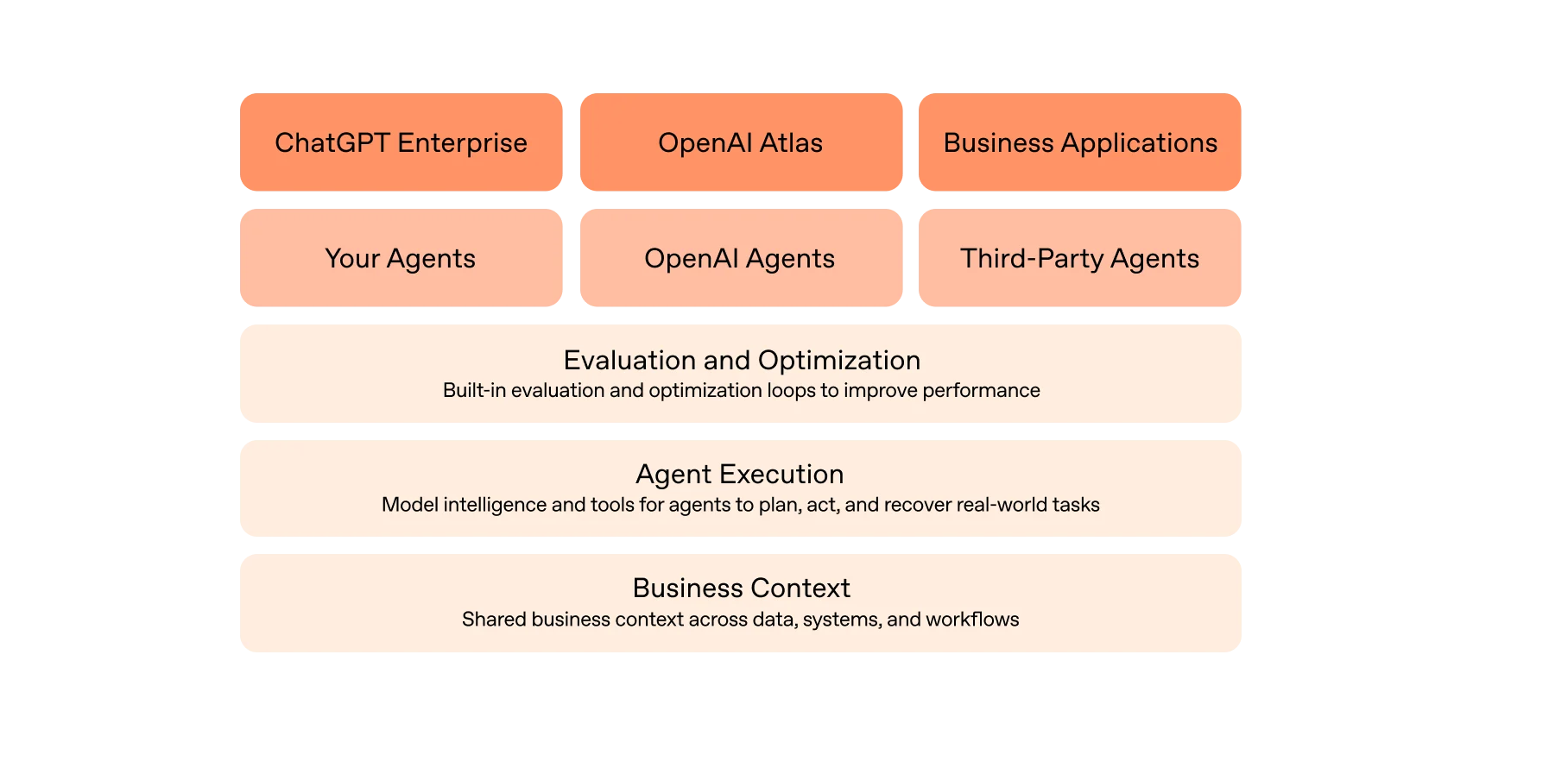

The platform is built around a three-layer architecture:

- Business Context — Shared business context across data, systems, and workflows. This is the foundational layer that connects to your systems of record and makes institutional knowledge available to every agent.

- Agent Execution — Model intelligence and tools for agents to plan, act, and recover across real-world tasks. Supports parallel agent execution for complex workflows.

- Evaluation and Optimization — Built-in evaluation and optimization loops that show what’s working and what isn’t, so agents improve with experience.

Crucially, Frontier is designed as a multi-vendor platform. It supports agents that OpenAI builds, agents that enterprises build themselves, and agents from third parties—including Google and Anthropic. The interfaces layer spans ChatGPT Enterprise, OpenAI Atlas, and custom business applications. Enterprise security and governance are built in for sensitive and regulated work.

As OpenAI put it: “What’s slowing enterprises down isn’t model intelligence—it’s how agents are built and run.”

The Business Context Layer: Why It Matters

The Business Context layer is the most architecturally significant piece of Frontier. It connects to enterprise systems of record—data warehouses, CRMs, ERPs, ticketing systems, and internal applications—and creates what OpenAI describes as a “semantic layer for the enterprise.”

This is the productized version of what practitioners have been calling the “knowledge layer”—all of a company’s data normalized into clean, structured, searchable formats that agents can reason over effectively. Instead of each agent needing its own bespoke data pipeline, Business Context provides a shared foundation that any agent can access.

The design choice here is deliberate. Rather than requiring organizations to consolidate data into a single repository, Frontier connects to information where it already lives. Agents access data warehouses, CRM platforms, ticketing tools, and internal applications through unified connectors. Over time, agents build persistent memory—turning past interactions into useful context that improves their performance.

This matters because it addresses the core bottleneck that has stalled most enterprise AI deployments. Organizations don’t fail at AI because the models aren’t capable enough. They fail because their agents lack the business context to make informed decisions. An agent without access to your company’s institutional knowledge, customer history, and operational data is just a generic chatbot with a corporate email address.

OpenAI’s Own Proof Point: The Internal Data Agent

Frontier didn’t emerge in a vacuum. Before productizing the Business Context layer for enterprises, OpenAI built this architecture internally—and the results are instructive.

OpenAI’s in-house data agent handles natural language queries across 600+ petabytes of data spanning approximately 70,000 datasets. The architecture reveals how seriously OpenAI takes the context problem:

The system connects an Agent-API to two distinct data layers. An Internal Data Knowledge Base combined with Company Context from tools like Slack, Google Docs, and Notion provides pre-processed offline context. Meanwhile, a Data Warehouse and Data Platform Sources (Spark, Airflow, metadata services) handle online synchronous calls for live data. Multiple entry points—Agent-UI, Local Agent-MCP, Remote Agent-MCP, and a Slack Agent—feed into the central API.

OpenAI’s own blog makes the core insight explicit:

“Context is everything. High-quality answers depend on rich, accurate context. Without context, even strong models can produce wrong results, such as vastly misestimating user counts or misinterpreting internal terminology.”

The data agent uses a multilayered approach to context: table usage metadata with deep schema understanding, human-provided annotations from domain experts, code-level definitions extracted from the codebase, institutional knowledge from internal documentation, a memory system that stores non-obvious corrections, and runtime context that inspects live schemas.

The lesson is striking. Even OpenAI—the company with the most advanced models in the world—needed to invest heavily in building a comprehensive knowledge layer before their own agents could deliver reliable, accurate results. If the organization that builds frontier models can’t skip the knowledge layer, no one can.

Agents Are Getting Smarter—Fast

The Frontier announcement doesn’t exist in isolation. It arrives alongside a rapid acceleration in model capabilities that makes enterprise agent deployment both more viable and more urgent.

Today alone saw the release of Claude Opus 4.6 from Anthropic—featuring a 1 million token context window, adaptive thinking that scales reasoning to task complexity, and the highest score on agentic coding benchmarks (Terminal-Bench 2.0). On knowledge work specifically, Opus 4.6 leads by 144 Elo points over GPT-5.2 across finance, legal, and technical domains. OpenAI simultaneously released GPT-5.3 Codex, their most capable agentic coding model—25% faster than its predecessor and the first model that was instrumental in creating itself.

The implication for enterprises is straightforward: agents are becoming dramatically more capable with each model generation. They can handle longer contexts, reason over more complex problems, and execute multi-step workflows with greater reliability. But that capability only compounds when agents have the right business context to work with. A more intelligent agent without access to your institutional knowledge is just a faster way to get generic answers.

OpenAI’s Strategic Move Up the Enterprise Stack

Frontier represents a fundamental strategic shift for OpenAI—from API provider to enterprise platform company. This is a move that puts OpenAI in direct competition not just with other model providers, but with established enterprise software vendors like Salesforce, ServiceNow, and Workday.

The competitive implications are significant. If Frontier agents can execute sales workflows, process customer requests, and manage operations autonomously, the traditional per-seat licensing model that underpins enterprise SaaS starts to look vulnerable. Why pay for 500 Salesforce licenses when agents handle much of the workflow?

But OpenAI is positioning Frontier as integration-first rather than replacement. As they put it: “We’re not going to build everything ourselves. We are going to be working with the ecosystem.” The multi-vendor agent support—your agents, OpenAI’s agents, and third-party agents from companies like Google and Anthropic—signals a platform play, not a lock-in play. Frontier aims to be the orchestration layer that sits above existing systems of record, not a replacement for them.

Early adoption from Fortune 500 companies—HP, Intuit, Oracle, Uber, and State Farm among the launch customers—suggests the enterprise appetite for this kind of platform is real.

Early Enterprise Results

The early numbers from Frontier deployments illustrate what becomes possible when agents operate with proper business context:

- Manufacturing: A major manufacturer reduced production optimization work from six weeks to one day using Frontier agents.

- Financial services: A global investment company deployed agents across the sales process, freeing up over 90% of salespeople’s time to spend with customers.

- Energy: A large energy producer used agents to increase output by up to 5%, translating to over $1 billion in additional revenue.

These aren’t incremental improvements. They represent step-function changes in operational efficiency—the kind of results that only become possible when agents have deep, structured access to business context and can act on it autonomously.

The Bottom Line

Frontier formalizes what practitioners have been learning through building production agents: the knowledge layer isn’t an afterthought—it’s the foundation. OpenAI has made Business Context the bottom layer of their entire enterprise platform, sitting beneath agent execution, evaluation, and every interface.

The organizations that will benefit most from platforms like Frontier are those that have already done the work of organizing, normalizing, and structuring their internal data. Clean context layers, well-documented institutional knowledge, and structured access to business systems—these are the prerequisites that determine whether enterprise agents deliver transformative results or just expensive mediocrity.

As agents become more capable with each model generation, and as platforms like Frontier make deployment increasingly accessible, the differentiator is no longer which model you use or which platform you choose. It’s the quality of your business context—the proprietary institutional knowledge, processes, and data that no competitor can replicate.

At IntelligentNoise, we build the full agent stack—from the knowledge layers that organize your company’s data into clean, structured context, to the agents and workflows that operate on top. If you’re ready to build the foundation for enterprise AI, book a strategy call to discuss your agent architecture.